Amazon wanted to build a financial product that people actually needed. Something different from what banks and finance apps already offer.

Most financial tools don't help with real problems. People struggle to manage spending, save money, and invest. The market is crowded with apps that make things more confusing, not less. People feel stressed and stuck.

I led the research and design for a new approach. I studied the market, analysed competitors, and validated ideas directly with customers. The goal was to build something that fit into people's lives and actually helped them manage money better.

The pitch was simple: Amazon could give people personalised financial guidance that actually connects to their real spending. Not generic advice. Not another budgeting app they'll ignore. Something integrated into how they already shop and live. The work showed how Amazon could help people feel more in control of their money without making things complicated.

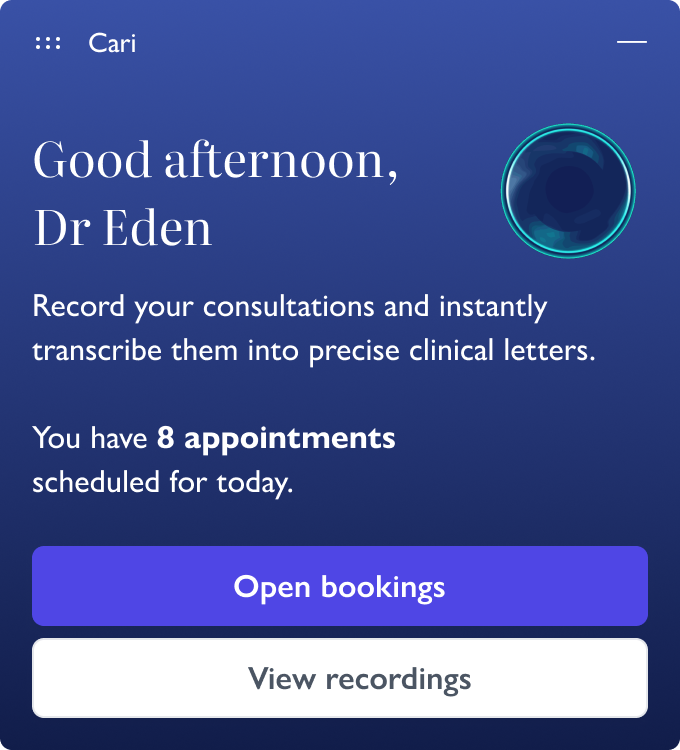

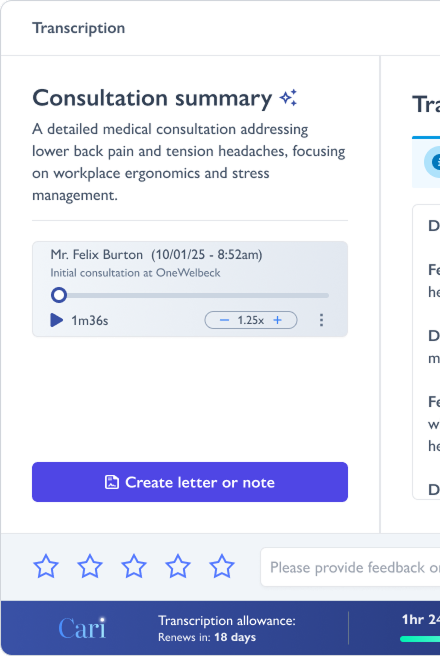

Cari AI is an AI transcription tool built for healthcare clinicians. It converts patient consultations into clinical notes.

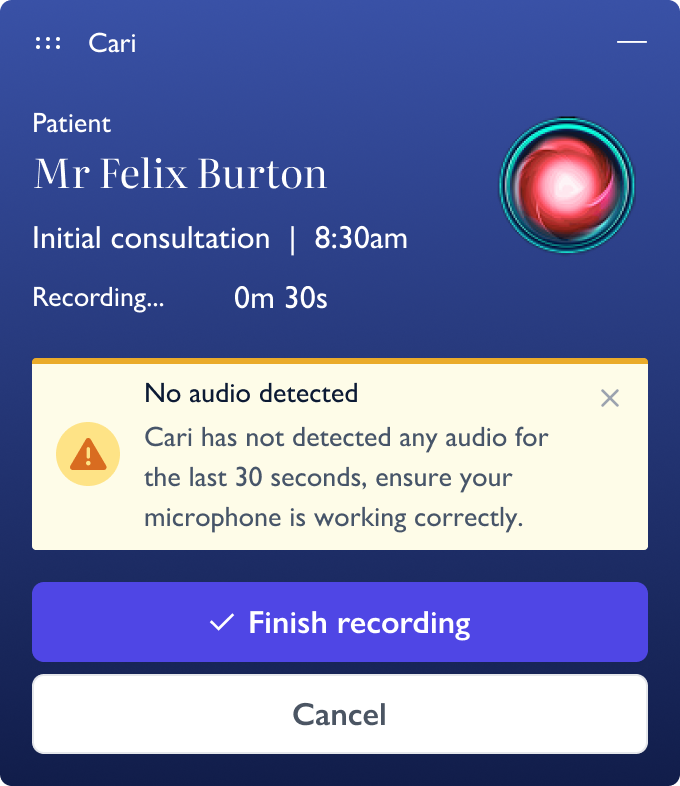

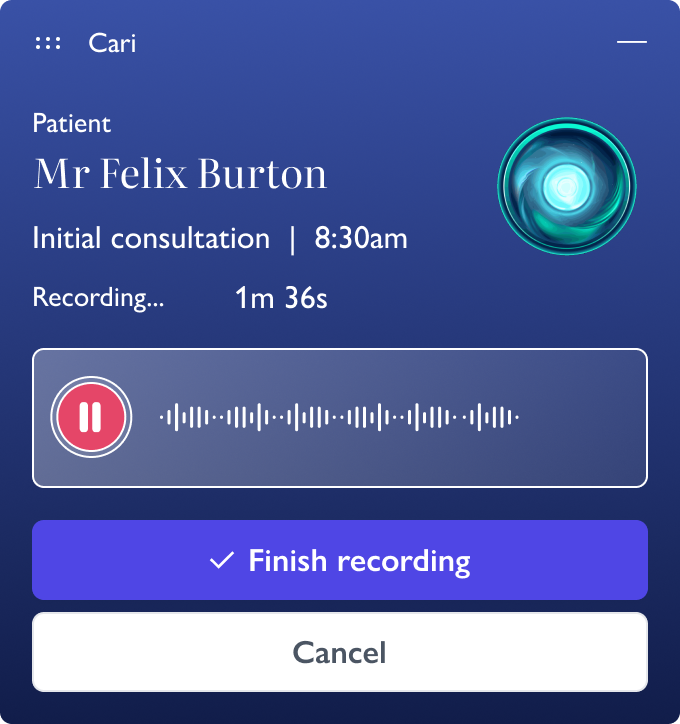

After launching at Carebit, things went wrong. Clinicians stopped using it. The interface was confusing. People couldn't figure out how to use it properly. The tool felt unreliable, even if it wasn't breaking things.

When clinicians don't understand how a tool works, they don't trust it. And in healthcare, trust matters. If you're not confident in what the AI is doing, you won't use it.

This case study covers how I redesigned Cari AI from the ground up. I had to simplify the experience, make it intuitive, and prove to clinicians that it actually worked.

The result: Over 1,500 clinicians now use it across nearly 1,000 organisations. It's taken a significant admin burden off their plates. Hours of note-taking that used to eat into their day are now automated. That time goes back where it belongs, with patients.

Lloyds Banking Group had to meet new security rules. They needed an authentication pattern that would work across everything: login, payments, and online shopping.

The challenge was designing something that could scale. One pattern, multiple uses. But it had to be simple enough for everyone to use. That meant customers with low digital confidence, non-native English speakers, and people who'd never done this before.

I ran multiple rounds of user testing. I watched where people got stuck and identified which instructions caused confusion. Then we redesigned those parts. Each iteration fixed specific problems the team had observed. We tested again, found new issues, and refined further. This cycle continued until the pattern worked for the bank's most vulnerable users, not just the most confident ones.

The results: 59% of customers used app-based authentication. 99% success rate across 31 million authentications. This case study shows how I designed it.

Pizza Hut UK had problems. Customers weren't coming back as often. Competition from delivery apps was tough. And their loyalty program was old and boring, so people didn't use it.

They also wanted to expand globally. But first, they needed to fix the experience at home and build something that could work in other markets.

I was the Lead Product Designer on this project. I led the research to understand what wasn't working. I also mentored a junior designer through the process.

I redesigned the loyalty program to make it more personal and easier to use across different channels.

Three months after launch in the UK:

- Customer retention went up 25%

- Average order value increased 15%

- Loyalty program engagement improved 12%

The results gave Pizza Hut a working model they could take to other countries.

Amazon wanted to sell insurance online. The idea was simple: let people shop for home insurance the same way they buy everything else on Amazon. But we had a problem. 95% of people who landed on the page left immediately.

I figured out why they were leaving. The landing page had three confusing buttons with insurance jargon. People didn't know which one to click, so they just gave up. I simplified it down to one clear button, moved the insurance type selection to a separate page, and used pictures to explain the options. After two weeks of testing, we increased conversions by 7%.

Upon completing the research phase, I synthesised the collected data to create a suite of research-driven deliverables:

By grounding these deliverables in solid research, we ensured that our design decisions were based on real user needs and behaviours rather than assumptions. This approach improved the likelihood of creating a successful product and provided a strong foundation for justifying design choices to stakeholders.

Throughout the process, I maintained open lines of communication with the Product Manager and critical stakeholders, regularly presenting research findings and design concepts for feedback. This iterative approach allowed us to refine our understanding of user needs and adjust our design direction as necessary, ultimately leading to a more robust and user-centred trading platform.

Next, I embarked on creating low- and high-fidelity wireframes, a critical step in the design process. The low-fidelity wireframes allowed me to quickly sketch out basic layouts and functionalities, focusing on the overall structure and user flow without getting bogged down in visual details. This stage was crucial for rapid iteration and gathering initial feedback.

Following this, I developed high-fidelity wireframes, which provided a much more detailed and visually accurate representation of the final product. These wireframes encompassed many complex elements, from intricate trading blotters to sophisticated back-office input forms. The high-fidelity wireframes were the foundation for creating interactive prototypes, allowing stakeholders and potential users to get a tangible feel for the product before any actual development began.

Once the designs were finalised, I crafted a comprehensive discussion guide. This document was instrumental in structuring the upcoming research sessions, ensuring they would be productive and yield the specific insights we sought. The guide included carefully crafted questions and scenarios to uncover user preferences, pain points, and workflow patterns. Additionally, it addressed features the product manager had requested, which I suspected might be unnecessary. This provided an excellent opportunity to validate or challenge these assumptions through direct user feedback.

The qualitative research was structured into two rounds of seven sessions each, with each session lasting between 45 minutes and an hour. This design allowed for an initial round of testing followed by a week-long period for design iteration based on the first round's feedback. The second round then served to validate these iterations. The research participants were carefully selected to represent a cross-section of end-users, including traders and back-office staff. This diverse group ensured we could test the application across various use cases and scenarios, including typical workflows (happy paths) and error states (unhappy paths).

The first round of sessions proved to be highly successful. We observed all user groups effectively navigating and using the new, improved applications. The positive response from the traders, a group known for their high standards and critical feedback, was particularly noteworthy. Their enthusiasm for the new design was a significant win. Similarly, the back-office staff expressed great satisfaction with the streamlined processes, which eliminated redundant data entry. This improvement increased efficiency and reduced the potential for errors, allowing them to complete tasks more quickly and accurately.

Despite the overall success, we also gathered valuable insights for further improvements. Through careful observation and user feedback, I identified several areas where the user experience could be enhanced. Importantly, I also confirmed that certain features the product owner requested were unnecessary, as users either didn't use them or needed clarification about them. This evidence-based approach allowed me to confidently recommend removing these features in the next iteration, streamlining the interface and reducing development costs.

Following the initial round, I implemented the suggested improvements and conducted a second round of user testing. This iteration was crucial to ensure the changes addressed the previously observed issues without introducing new problems. I was pleased that the tweaks were effective, and the refined design was ready to be handed to the development team for production.

In addition to the functional design, I produced detailed UI designs and a comprehensive style guide. This documentation meticulously outlined every feature and application asset for the UI Developer. The style guide covered typography, colour palettes, button styles, form elements, and other UI components, ensuring consistency across the application. Moreover, this documentation serves as the foundation for a design system that can be used for future iterations and expansions of the application, promoting coherence and efficiency in ongoing development.

After finalising our designs, we initiated the crucial handover process to the developer feature team. This transition marked a significant milestone in our project, moving from conceptual design to practical implementation. I took a proactive approach in this phase, working closely and collaboratively with the development team to ensure a smooth transfer of knowledge and design intent.

During this period, I maintained constant communication with the developers, scheduling regular check-ins and design review sessions. These meetings served multiple purposes: they allowed me to clarify design decisions, explain the rationale behind specific user interface choices, and address any technical constraints or implementation challenges that arose. I also provided detailed annotations on our design files, outlining interactions, responsive behaviours, and edge cases to minimise ambiguity.

As questions and queries inevitably surfaced during the development process, I made myself readily available to the team. This open-door policy facilitated quick resolution of issues, preventing potential bottlenecks in the development timeline. I also participated in code reviews from a design perspective, ensuring that the implemented features accurately reflected the intended user experience.

Once we had a working codebase, I arranged comprehensive accessibility testing. This critical step aimed to evaluate the product's usability across a broad spectrum of accessibility needs. Our testing covered various aspects, including:

- Visual impairments: Testing with screen readers, ensuring proper colour contrast, and verifying text scalability.

- Motor impairments: Evaluating keyboard navigation and ensuring that interactive elements were easily targetable.

- Cognitive impairments: Assessing the clarity of instructions, consistency of layout, and overall ease of use.

We used automated testing tools and engaged manual testers with various accessibility needs to get a holistic view of our product's accessibility. The insights gathered from these tests were then prioritised and incorporated into our development backlog.
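To illustrate the colour-contrast check mentioned above, here is a minimal sketch of the WCAG 2.x contrast-ratio calculation. It is not the tooling used on the project; the function names and sample colours are hypothetical, and the thresholds are the WCAG AA values (4.5:1 for normal text, 3:1 for large text).

```python
def _channel_to_linear(value: int) -> float:
    """Convert one 8-bit sRGB channel to its linear-light value (WCAG 2.x formula)."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_colour: str) -> float:
    """Relative luminance of a colour given as '#RRGGBB'."""
    h = hex_colour.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (
        0.2126 * _channel_to_linear(r)
        + 0.7152 * _channel_to_linear(g)
        + 0.0722 * _channel_to_linear(b)
    )


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colours; the lighter one goes in the numerator."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """True if the pair meets the WCAG AA minimum for the given text size."""
    return contrast_ratio(foreground, background) >= (3.0 if large_text else 4.5)


if __name__ == "__main__":
    # Hypothetical sample pairs, not colours from the actual style guide.
    for fg, bg in [("#000000", "#FFFFFF"), ("#767676", "#FFFFFF"), ("#777777", "#FFFFFF")]:
        print(fg, bg, round(contrast_ratio(fg, bg), 2), passes_aa(fg, bg))
```

A check like this only covers one part of the list above. Screen-reader behaviour, keyboard navigation, and cognitive load still need manual testing with real users.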

As we approached the launch, I closely monitored the product's performance through targeted testing sessions. These tests were designed to simulate real-world usage scenarios, allowing us to identify and address performance bottlenecks or usability issues. I worked with the team to prioritise the features and optimisations that aligned most closely with our users' primary intentions and needs. This user-centric approach ensured we delivered maximum value with our initial launch while setting the stage for future iterations.

Simultaneously, I initiated early collaboration with the LBG (Lloyds Banking Group) communications team. This partnership was crucial for several reasons:

This early and close collaboration with the communications team ensured that our product launch was not only a technical success but also effectively positioned and communicated to our target audience. It allowed us to create a cohesive narrative around the product, from its conception to its release, enhancing its reception and adoption among users.

Throughout this final phase, I maintained a flexible approach, ready to make last-minute adjustments based on feedback or unforeseen challenges. This adaptability, combined with our thorough preparation and cross-functional collaboration, set the stage for a successful product launch.

Increased User Productivity: The new system design significantly boosted user productivity across various roles. We streamlined the trading blotter interface for traders, allowing for quicker execution of trades and more efficient management of positions. This was achieved through:

We redesigned input forms and workflows for back-office staff to minimise redundant data entry. This was accomplished by:

We conducted time-motion studies before and after implementation, which showed an average reduction in task completion time of 27% across all user groups. This translated to approximately 2.5 hours saved per user per day, allowing staff to focus on more value-added activities.

Leveraged Existing APIs to Reduce User Input: We identified several opportunities to integrate existing internal and external APIs to automate data population and reduce manual input. This initiative involved:

Leveraging these APIs reduced manual data entry by approximately 60%, saving time and significantly reducing the potential for human error. The system now automatically pulls and updates information from 12 different internal and external sources, ensuring data accuracy and timeliness.

Increased User Satisfaction: User satisfaction saw a marked improvement, as evidenced by both quantitative and qualitative feedback:

Key factors contributing to increased satisfaction included:

Significantly Reduced Support Costs for the Bank: The transition from a third-party-provided solution to an in-house system resulted in substantial cost savings:

We conducted a comprehensive cost-benefit analysis showing that the new system would pay for its development costs within 18 months, primarily through eliminating third-party fees and reduced support costs. Over five years, the projected savings are estimated at £14 million, not including the value of increased productivity and reduced errors.

Furthermore, bringing the system in-house has given us greater flexibility to rapidly implement new features and respond to changing market conditions. This provides a strategic advantage over competitors relying on less flexible third-party solutions.

This combination of increased productivity, leveraged APIs, higher user satisfaction, and reduced costs has positioned the new system as a significant success from both a user and a business standpoint. It has set a new standard for internal tools within the organisation and has become a model for future development projects.

The redesigned customer and loyalty experience delivered significant measurable improvements within three months of UK launch:

The design proved security and usability can work together when you build trust into every detail. By focusing on clarity, accessibility, and iteration, we turned a regulatory requirement into something that actually worked for millions of customers.

What I Learned:

- Visual guidance cuts mental effort.

- Custom animations were essential for cross-device flows.

- Copy is design. Working with copywriters improved understanding dramatically.

- Start small, then scale. Testing with a subset first let us iterate quickly.

- Give people options. Multiple verification methods built trust and worked for different needs.

- Design for problems. Despite push notification delays, clear visuals and text kept it working.

- Track everything. Good analytics specs gave us data to keep improving.

The project delivered comprehensive proof-of-concept prototypes and strategic recommendations for Amazon's board:

The redesign worked. But more important, it showed that you can rebuild customer loyalty if you make things simple and personal.

Key things:

• Simple wins. The new loyalty program was way easier to understand. More people used it.

• Rewards need to come fast. Small rewards often beat big rewards later.

• Personalization increases order value. People bought more when we suggested things they'd like.

• 400 survey responses gave us confidence. Good data helped convince stakeholders.

• Mentoring multiplies impact. Teaching the junior designer made both of us better.

• Launch what matters first. We cut features to hit the deadline. That was the right call.

• Connect everything. Web, mobile, in-store. If it's not consistent, people notice.

• Think global from the start. Built the system so it could work in other countries later.

Amazon's board decided not to move forward with Amazon Money. This happens in large companies. It's part of the job. The experience taught me that creating something isn't enough. You have to champion it through layers of evaluation. Product designers bridge the gap between innovation and business reality.

What I Learned:

- Test with customers early and often. It strengthens your case.

- Build on what already exists. The best opportunities fit into familiar places.

- Give people insights, not just data. That's what keeps them coming back.

- Automate the boring stuff, but let power users take control when they want.

- In financial products, be clear about data and security. Always.

- You need to advocate for your work. Decision-makers don't always see what you see.

- Each setback teaches you something. It gets easier to navigate these situations.

The redesign showed that trust and usability can't be separated in healthcare AI. You need both. By rebuilding from scratch and focusing on clarity and transparency, we turned a failing product into something doctors actually use.

Key things:

• When users are busy, find other ways to research - Support staff were helpful when doctors didn't have time.

• Trust needs to be designed in - Privacy messages, progress indicators, and review systems all mattered.

• Simple beats feature-rich - The three-step workflow was easier to understand than the old version.

• Start small - Rolling out to power users first let us fix problems early.

• Track everything - Analytics gave us visibility we never had before.

A 7% increase is good. But we still have work to do. The page is better, but it's not fixed.

There's still another problem to solve: do people even want insurance from Amazon? That's a bigger question. It'll take more than design changes. It needs the company to decide if they're serious about insurance.

What stuck with me: